However, this is not based on the cost of the query during the initial version of Hive.ĭuring later versions of Hive, query has been optimized according to the cost of the query (like which types of join to be performed, how to order joins, the degree of parallelism, etc.). Hive optimizes each query's logical and physical execution plan before submitting for final execution. Create Table Employee_Part(EmloyeeID int, EmployeeName Varchar(100),Ĭlustered By (EmployeeID) into 20 Buckets Hive Partition is further subdivided into clusters or buckets and is called bucketing or clustering. The Hive table is divided into a number of partitions and is called Hive Partition. SET .mode = nonstrict Import Data From Temporary Table To Partitioned Table Insert Overwrite table Employee_Part Partition(City) Select EmployeeID,ĮmployeeName,Address,State,City,Zipcode from Emloyee_Temp Use Bucketing LOAD DATA INPATH '/home/hadoop/hive' INTO TABLE Employee_Temp Create Partitioned Table Create Table Employee_Part(EmloyeeID int, EmployeeName Varchar(100),Įnable Dynamic Hive Partition SET = true Create Temporary Table and Load Data Into Temporary Table Create Table Employee_Temp(EmloyeeID int, EmployeeName Varchar(100), Instead of querying the whole dataset, it will query partitioned dataset. With partitioning, data is stored in separate individual folders on HDFS. ORC supports compressed (ZLIB and Snappy), as well as uncompressed storage. Select * from Employee_Details Insert into Employee_Details_ORC Select a.EmployeeID, a.EmployeeName, b.Address,b.Designation from Employee_ORC a Select * from Employee Insert into Employee_ORC Ĭreate Table Employee_Details_ORC (EmployeeID int, Address varchar(100) STORED AS ORC tblproperties("compress.mode"="SNAPPY") Create Table Employee_ORC (EmployeeID int, EmployeeName varchar(100),Age int) Converting this table into ORCFile format will significantly reduce the query execution time.

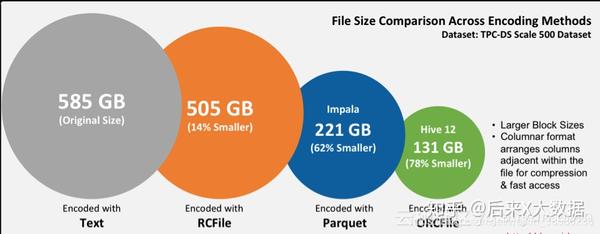

Select a.EmployeeID, a.EmployeeName, b.Address,b.Designation from Employee aĪbove query will take a long time, as the table is stored as text. Let's say we will use join to fetch details from both tables. It uses techniques like predicate push-down, compression, and more to improve the performance of the query.Ĭonsider two tables: employee and employee_details, tables that are stored in a text file. The ORCFile format is better than the Hive files format when it comes to reading, writing, and processing the data. Optimized Row Columnar format provides highly efficient ways of storing the hive data by reducing the data storage format by 75% of the original. Vectorization can be enabled in the environment by executing below commands. It improves the performance for operations like filter, join, aggregation, etc. Vectorization improves the performance by fetching 1,024 rows in a single operation instead of fetching single row each time. Tez engine can be enabled in your environment by setting to tez: set =tez Use Vectorization Tez improved the MapReduce paradigm by increasing the processing speed and maintaining the MapReduce ability to scale to petabytes of data. Use Tez EngineĪpache Tez Engine is an extensible framework for building high-performance batch processing and interactive data processing. Hive provides an SQL-like interface to query data stored in various data sources and file systems. 
ERROR WorkerSinkTask Task is being killed and will not recover until manually restarted (.runtime.WorkerTask:180) INFO Member connector-consumer-ds2_sink_book_pnlversion_v1-0-d2197886-cf74-4af6-8be9-d7d74f7b3a06 sending LeaveGroup request to coordinator 9092 (id: 2147483647 rack: null) (.:879) INFO Publish thread interrupted for client_id=connector-consumer-ds2_sink_book_pnlversion_v1-0 client_type=CONSUMER session= cluster=NV4qXOVlRtOAedA45AHcXg group=connect-ds2_sink_book_pnlversion_v1 (io.Apache Hive is a data warehouse built on the top of Hadoop for data analysis, summarization, and querying. Probably caused by an error thrown previously. Error: java.io.IOException: The file being written is in an invalid state. allocated memory: 64 (.InternalParquetRecordWriter:160) Facing issues when format.class used with parquet data format.
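The property names in the article's `set` commands are missing (`set =tez`, `SET = true`, `SET .mode = nonstrict`), and the vectorization commands are not shown at all. As a minimal sketch, assuming a reasonably recent Hive release with Tez available on the cluster, the standard Hive properties for these features look like the following. The table name `employee_part_sketch` and all column types are hypothetical, chosen only to mirror the columns in the article's insert statement. `Employee_Temp` is assumed to be the staging table from the article, with those columns.

```sql
-- Execution engine and vectorized execution (standard Hive properties).
SET hive.execution.engine=tez;
SET hive.vectorized.execution.enabled=true;
SET hive.vectorized.execution.reduce.enabled=true;

-- Dynamic partitioning: let Hive derive partition values from the data.
SET hive.exec.dynamic.partition=true;
SET hive.exec.dynamic.partition.mode=nonstrict;

-- Cost-based optimization and the statistics it relies on.
SET hive.cbo.enable=true;
SET hive.compute.query.using.stats=true;
SET hive.stats.fetch.column.stats=true;

-- Hypothetical table combining partitioning, bucketing, and ORC storage.
-- Column types are assumptions; the partition column is declared separately
-- from the regular columns.
CREATE TABLE IF NOT EXISTS employee_part_sketch (
  EmployeeID   INT,
  EmployeeName VARCHAR(100),
  Address      VARCHAR(100),
  State        VARCHAR(100),
  Zipcode      VARCHAR(100)
)
PARTITIONED BY (City STRING)
CLUSTERED BY (EmployeeID) INTO 20 BUCKETS
STORED AS ORC
TBLPROPERTIES ("orc.compress"="SNAPPY");

-- The dynamic partition column (City) must be the last column selected.
-- On Hive 1.x, hive.enforce.bucketing=true was also needed before inserting.
INSERT OVERWRITE TABLE employee_part_sketch PARTITION (City)
SELECT EmployeeID, EmployeeName, Address, State, Zipcode, City
FROM Employee_Temp;

-- Gather table and column statistics so the cost-based optimizer has input.
ANALYZE TABLE employee_part_sketch PARTITION (City) COMPUTE STATISTICS;
ANALYZE TABLE employee_part_sketch PARTITION (City) COMPUTE STATISTICS FOR COLUMNS;
```

Whether each setting helps depends on the Hive version and cluster defaults; several of these are already enabled by default in newer releases, so it may be worth checking the current value with `SET <property>;` before changing it.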
